With that let us go on to the next video where we start to define the notation we use to define these sequence problems.As far as I'm concerned there's nothing like a SplitTable or a SelectTable in PyTorch. So I hope this gives you a sense of the exciting set of problems that sequence models might be able to help you to address. So in this course you learn about sequence models are applicable, so all of these different settings. And in some of these examples only either X or only the opposite Y is a sequence. In some, both the input X and the output Y are sequences, and in that case, sometimes X and Y can have different lengths, or in this example and this example, X and Y have the same length.

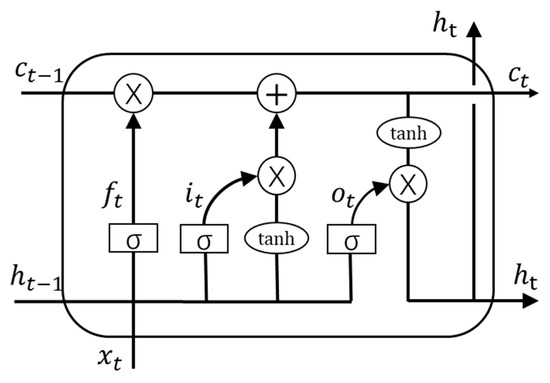

But, as you can tell from this list of examples, there are a lot of different types of sequence problems. So all of these problems can be addressed as supervised learning with label data X, Y as the training set. And in name entity recognition you might be given a sentence and asked to identify the people in that sentence. In video activity recognition you might be given a sequence of video frames and asked to recognize the activity. In machine translation you are given an input sentence, voulez-vou chante avec moi? And you're asked to output the translation in a different language. And so given a DNA sequence can you label which part of this DNA sequence say corresponds to a protein. So your DNA is represented via the four alphabets A, C, G,and T. In sentiment classification the input X is a sequence, so given the input phrase like, "There is nothing to like in this movie" how many stars do you think this review will be? Sequence models are also very useful for DNA sequence analysis. But here X can be nothing or maybe just an integer and output Y is a sequence. In this case, only the output Y is a sequence, the input can be the empty set, or it can be a single integer, maybe referring to the genre of music you want to generate or maybe the first few notes of the piece of music you want. Music generation is another example of a problem with sequence data. So sequence models such as a recurrent neural networks and other variations, you'll learn about in a little bit have been very useful for speech recognition. Both the input and the output here are sequence data, because X is an audio clip and so that plays out over time and Y, the output, is a sequence of words. In speech recognition you are given an input audio clip X and asked to map it to a text transcript Y. Let's start by looking at a few examples of where sequence models can be useful. And in this course, you learn how to build these models for yourself. 
Models like recurrent neural networks or RNNs have transformed speech recognition, natural language processing and other areas. In this course, you learn about sequence models, one of the most exciting areas in deep learning. Welcome to this fifth course on deep learning. It provides a pathway for you to take the definitive step in the world of AI by helping you gain the knowledge and skills to level up your career. The Deep Learning Specialization is a foundational program that will help you understand the capabilities, challenges, and consequences of deep learning and prepare you to participate in the development of leading-edge AI technology. In the fifth course of the Deep Learning Specialization, you will become familiar with sequence models and their exciting applications such as speech recognition, music synthesis, chatbots, machine translation, natural language processing (NLP), and more.īy the end, you will be able to build and train Recurrent Neural Networks (RNNs) and commonly-used variants such as GRUs and LSTMs apply RNNs to Character-level Language Modeling gain experience with natural language processing and Word Embeddings and use HuggingFace tokenizers and transformer models to solve different NLP tasks such as NER and Question Answering.
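To make the different X, Y settings from the lecture a bit more concrete, here is a minimal Python sketch. It is only an illustration, not code from the course: the `Example` class, the `kind` helper, the toy tokens, the star rating, the note names, and the label values are all made-up assumptions. It represents a sequence as a Python list and reports which of X and Y is a sequence.

```python
from dataclasses import dataclass
from typing import Any


@dataclass
class Example:
    """One hypothetical (X, Y) training pair for a sequence task."""
    task: str
    x: Any  # input: a list (sequence), a single value, or None
    y: Any  # label: a list (sequence) or a single value


def is_sequence(value: Any) -> bool:
    # Assumption for this sketch: only lists and tuples count as sequences.
    return isinstance(value, (list, tuple))


def kind(example: Example) -> str:
    """Describe which of X and Y is a sequence, as in the lecture's list."""
    x_seq, y_seq = is_sequence(example.x), is_sequence(example.y)
    if x_seq and y_seq:
        if len(example.x) == len(example.y):
            return "sequence -> sequence (same length)"
        return "sequence -> sequence (different lengths)"
    if x_seq:
        return "sequence -> single label"
    if y_seq:
        return "single value or nothing -> sequence"
    return "no sequence"


# Toy data; every value below is invented for illustration.
EXAMPLES = [
    Example("sentiment classification",
            ["There", "is", "nothing", "to", "like", "in", "this", "movie"],
            1),                                   # made-up star rating
    Example("music generation", None, ["C4", "E4", "G4"]),  # made-up notes
    Example("named entity recognition",
            ["Alice", "met", "Bob"], [1, 0, 1]),  # 1 marks a person's name
    Example("machine translation",
            ["Voulez-vous", "chanter", "avec", "moi", "?"],
            ["Do", "you", "want", "to", "sing", "with", "me", "?"]),
]

for example in EXAMPLES:
    print(f"{example.task}: {kind(example)}")
```

In a real system X and Y would be audio samples, video frames, or token IDs rather than small Python lists, but the same three cases (only X, only Y, or both being sequences) still apply.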
